An always popular question on the PostGIS users mailing list has been “how do I find the N nearest things to this point?”.

To date, the answer has generally been quite convoluted, since PostGIS supports bounding box index searches, and in order to get the N nearest things you need a box large enough to capture at least N things. That means you need to know in advance how big to make your search box, which is not possible in general.

PostgreSQL has the ability to return ordered information where an index exists, but the ability has been restricted to B-Tree indexes until recently. Thanks to one of our clients, we were able to directly fund PostgreSQL developers Oleg Bartunov and Teodor Sigaev in adding the ability to return sorted results from a GiST index. And since PostGIS indexes use GiST, that means that now we can also return sorted results from our indexes.

Which is a very long way of saying that PostGIS (the development code in the source repository) now has the ability to do index-assisted nearest neighbour searching.

This feature (the PostGIS side of it) was funded by Vizzuality, and hopefully it comes in useful in their CartoDB work.

You will need PostgreSQL 9.1 and the PostGIS source code from the repository, but this is what a nearest neighbour search looks like:

    SELECT name, gid
    FROM geonames
    ORDER BY geom <-> st_setsrid(st_makepoint(-90,40),4326)
    LIMIT 10;

Note the <-> operator in the ORDER BY clause. This is where the magic occurs. The <-> is a “distance” operator, but it only makes use of the index when it appears in the ORDER BY clause. Between putting the operator in the ORDER BY and using a LIMIT to truncate the result set, we can very quickly (less than 10ms on a 2M record table, in this case) get the 10 nearest points to our test point.
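To check that the ordering really is being driven by the index, a sketch along these lines may help. It assumes the geonames table has a GiST index on its geom column; the index name `geonames_geom_gist` is hypothetical, and the exact plan text will vary between versions, so no output is shown.

```sql
-- Assumption: a GiST index on the geometry column; the index name is hypothetical.
-- Skip this statement if the table is already indexed.
CREATE INDEX geonames_geom_gist ON geonames USING GIST (geom);

-- Refresh planner statistics after building the index.
ANALYZE geonames;

-- Ask the planner how it intends to run the nearest neighbour query.
EXPLAIN
SELECT name, gid
FROM geonames
ORDER BY geom <-> ST_SetSRID(ST_MakePoint(-90, 40), 4326)
LIMIT 10;
```

If the plan shows an index scan on the GiST index feeding the Limit node, rather than a Sort over a sequential scan, the index is supplying the ordering.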

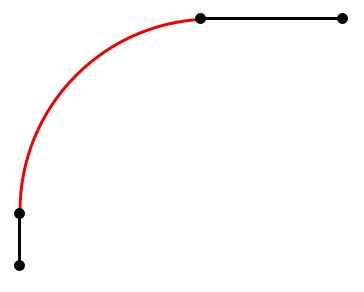

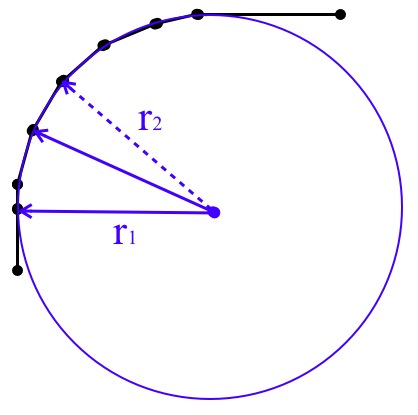

“It can’t possibly be this easy!!” You’re right. It can’t. Because it is traversing the index, which is made of bounding boxes, the distance operator only works with bounding boxes. For point data, the bounding boxes are equivalent to the points, so the answers are exact. But for any other geometry types (lines, polygons, etc) the results are approximate.

There are actually two different approximations available for you to choose from.

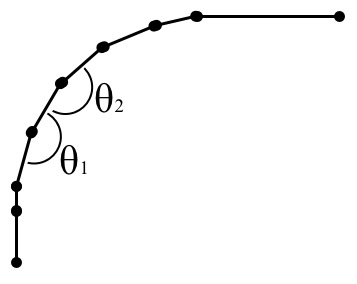

- Using the <-> operator, you get the nearest neighbour using the centers of the bounding boxes to calculate the inter-object distances.
- Using the <#> operator, you get the nearest neighbour using the bounding boxes themselves to calculate the inter-object distances.
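To see how the two approximations differ on non-point data, both operators can be placed in the select list side by side. This is only a sketch: it assumes a table `parcels(parcel_id, geom)` with polygon geometries in SRID 3005 and a GiST index on geom, and the column aliases are made up for illustration.

```sql
-- Assumption: parcels(parcel_id, geom) holds polygons in SRID 3005, indexed with GiST.
SELECT
  parcel_id,
  -- distance between bounding box centers
  geom <-> 'SRID=3005;POINT(1011102 450541)'::geometry AS center_distance,
  -- distance between the bounding boxes themselves
  geom <#> 'SRID=3005;POINT(1011102 450541)'::geometry AS box_distance
FROM parcels
-- Only the operator in the ORDER BY clause is driven by the index.
ORDER BY geom <#> 'SRID=3005;POINT(1011102 450541)'::geometry
LIMIT 10;
```

A parcel whose bounding box contains the test point gets a box distance of zero, even when the parcel itself does not touch the point, which is one reason the results are only approximate.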

In general, because the box calculations are approximations of calculations on the objects themselves, getting a more exact “nearest N objects” is going to require a two-phase query, where the first phase grabs a larger candidate set, and the second phase does an exact test (just like all the other index-assisted predicates). So, for example:

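    -- Phase 1: use the index to pull the 100 nearest candidates by box distance.
    with index_query as (
      select
        st_distance(geom, 'SRID=3005;POINT(1011102 450541)') as distance,
        parcel_id, address
      from parcels
      order by geom <#> 'SRID=3005;POINT(1011102 450541)' limit 100
    )
    -- Phase 2: re-sort the candidates by exact distance and keep the closest 10.
    select * from index_query order by distance limit 10;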

The indexed query pulls the 100 nearest objects by box distance, and the second query pulls the 10 actual closest from that set.
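The candidate count of 100 is a guess, so it can be worth checking whether it was large enough. One way to reason about it: a bounding box always encloses its geometry, so the box distance can never exceed the exact distance. Any parcel left out of the candidate set has a box distance at least as large as the largest one inside it, so if the tenth exact distance is no greater than that largest box distance, nothing outside the set can be closer. The query below is a sketch of that check under the same assumptions as the example above; the aliases `box_distance`, `tenth_exact_distance` and `widest_box_distance` are made up for illustration.

```sql
WITH index_query AS (
  SELECT
    parcel_id,
    ST_Distance(geom, 'SRID=3005;POINT(1011102 450541)'::geometry) AS distance,
    geom <#> 'SRID=3005;POINT(1011102 450541)'::geometry AS box_distance
  FROM parcels
  ORDER BY geom <#> 'SRID=3005;POINT(1011102 450541)'::geometry
  LIMIT 100
),
ranked AS (
  -- Rank the candidates by exact distance, closest first.
  SELECT distance, box_distance,
         row_number() OVER (ORDER BY distance) AS rn
  FROM index_query
)
SELECT
  -- Exact distance of the tenth closest candidate.
  (SELECT distance FROM ranked WHERE rn = 10) AS tenth_exact_distance,
  -- Largest box distance reached by the candidate set.
  (SELECT max(box_distance) FROM ranked) AS widest_box_distance;
```

If `tenth_exact_distance` comes back larger than `widest_box_distance`, the candidate set may have missed a closer parcel, and raising the inner LIMIT is the simplest fix.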